Synchronizing your game to a song

Hi there, I’m Kristijan Trajkovski, the programmer from Dark-1.

As you may or may not know, we’re working on Odium: To the core, a single-button, music-based floating game in which you follow a level that is synchronized with our original soundtrack. Traps, gates, coins and other interactive cosmetics in the levels are triggered at specific times during the soundtrack. In this post, I’ll explain how this works and how you could use it in your game.

In our very first game jam prototype, made at the Global Game Jam Skopje back in 2013, we had an animation with events that changed the speed at certain keyframes, and we played that animation every time the game started. As you may assume, this was neither easy nor practical. We only had two key points in that prototype, which gave a good illusion that the game was controlled by the music, but it was fairly easy to see through if you played the game multiple times.

After the game jam we decided to make Odium into a full game. The one way of synchronizing the game that we knew unfortunately didn’t work. We looked through Unity’s Asset Store for an asset that could do what we needed: easily trigger events at certain times during a track and, optionally, let us define curves for the player’s speed on top of an audio file’s texture. We couldn’t find anything of the sort.

I made a small script where you could type in timestamps in seconds and a message for each. It sent the message to the object when that time was reached in the track. It worked for a short while, but we decided that it would be better if speed changes were not instantaneous. We also wanted to add coins synced to the snare drum in the tracks, and typing in each one manually was tedious.

DarkACE

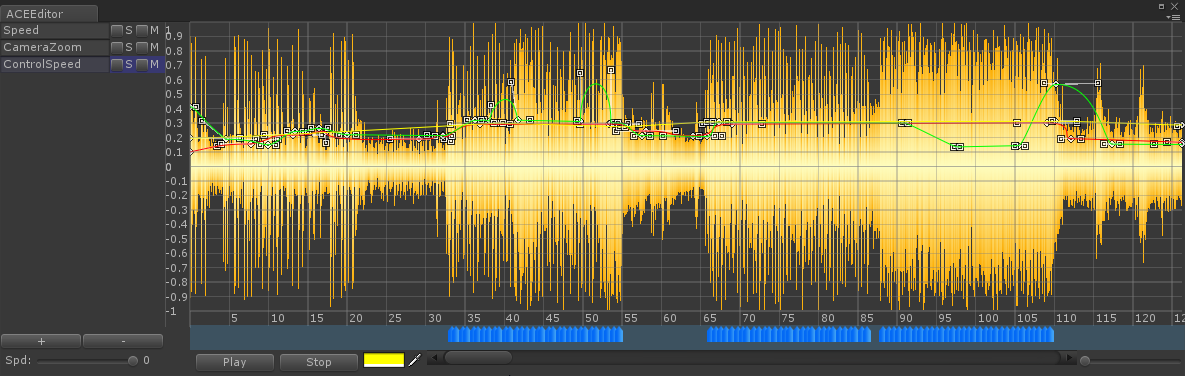

I decided to make an asset that’ll ease our workflow and that could be sold on Unity’s asset store. I rolled up my sleeves and started working. What I imagined was a list of curves on the left side, each with its own name and color that can be changed, and a track’s texture to the right, which can be scrolled and zoomed and has an overlay of the curves on top of it. Below it I added a track for events, similarly to the one on top of the animator. I worked for a few days on it, and what came out of it was this:

It’s an editor coupled with a component I called AudioEvents, which contains all the data for the curves and events, has utility functions to query the current value of curves, and also triggers the events by sending messages to the object it’s attached to.
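
To give a rough idea of what querying the current value of a curve involves, here is a minimal, engine-agnostic sketch in Python. It is not DarkACE’s code: the `Curve` class, its `keys` list and the linear interpolation between keyframes are all assumptions made for illustration, and a real editor would likely use smoother interpolation.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass
class Curve:
    """Hypothetical piecewise-linear curve over track time.

    keys is a list of (time_in_seconds, value) pairs sorted by time.
    """
    keys: list

    def evaluate(self, t: float) -> float:
        times = [key[0] for key in self.keys]
        # Clamp to the first/last keyframe outside the defined range.
        if t <= times[0]:
            return self.keys[0][1]
        if t >= times[-1]:
            return self.keys[-1][1]
        # Find the two keyframes surrounding t and interpolate linearly.
        i = bisect_right(times, t)
        (t0, v0), (t1, v1) = self.keys[i - 1], self.keys[i]
        return v0 + (v1 - v0) * (t - t0) / (t1 - t0)


# Example: speed stays at 2 until second 10, then ramps up to 6 by second 12.
speed = Curve([(0.0, 2.0), (10.0, 2.0), (12.0, 6.0)])
current_speed = speed.evaluate(11.0)  # halfway through the ramp
```

Because the speed is read from a curve on every frame instead of being set by a single event, changes ramp gradually rather than jumping instantly.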

At this point it is perfect for our needs. It’s the core of our level design process and still does the job quite well, even several years after it was initially made.

If you want to grab a copy, you can find it on Unity’s Asset Store.

Besides DarkACE, we’ve also built a few other things that help us with audio synchronization:

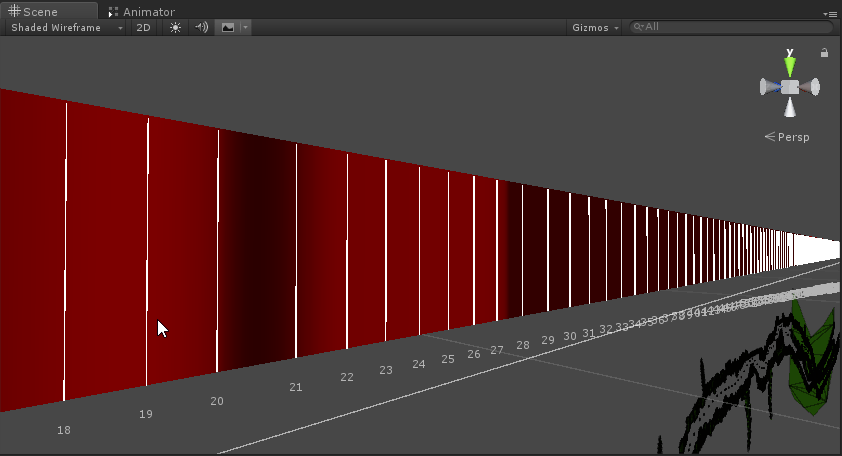

We have a level timeline, generated by using the speed curve in the editor, it looks like this:

The colors (from red to black) represent the player’s speed at the given time, where black is slow and red is fast. We have timestamps marked (note the distance between 20 and 21 in the image is bigger because the player is slower), so even if something needs to be changed or moved, or a new trap should be added, we easily know where to look.
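
One way such a timeline could be computed is sketched below in Python. This is an illustration under an assumption, not a description of our actual tool: it treats the player’s position along the level as the integral of the speed curve over time and records the position at every whole second. The function name `timeline_marks` and its parameters are hypothetical.

```python
def timeline_marks(speed_at, duration, step=0.01):
    """Return the distance travelled at each whole second of the track.

    speed_at is any function mapping track time (seconds) to speed.
    The integral of speed over time is approximated with the trapezoidal rule.
    """
    marks = [0.0]  # position at second 0
    distance = 0.0
    steps_per_second = round(1 / step)
    for second in range(int(duration)):
        for i in range(steps_per_second):
            t = second + i * step
            distance += 0.5 * (speed_at(t) + speed_at(t + step)) * step
        marks.append(distance)
    return marks


# Example: a constant speed of 3 units per second over a 4 second track.
marks = timeline_marks(lambda t: 3.0, duration=4)
```

The entry at index `n` of the result is where the mark for second `n` would be drawn along the level, so the spacing between neighbouring marks follows the speed curve.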

Traps in the game are mostly activated directly at certain timestamps. We have a trap activator script which takes all the traps in the level, sorts them by activation time, and then activates each one of them at the right time. Having them sorted greatly improves performance, as only one check is made at a time, for the next trap.
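
The idea can be sketched in a few lines of Python. This is a simplified illustration, not our actual script: the `TrapActivator` class, the `(activation_time, callback)` pairs and the `update` method called once per frame are assumptions.

```python
class TrapActivator:
    """Hypothetical activator that fires traps in order of activation time."""

    def __init__(self, traps):
        # traps: iterable of (activation_time_in_seconds, callback) pairs.
        # Sorting once up front means only the next trap has to be checked.
        self._traps = sorted(traps, key=lambda trap: trap[0])
        self._next = 0  # index of the next trap that has not fired yet

    def update(self, track_time):
        """Call once per frame with the current playback time of the track."""
        # A loop rather than a single check, in case several traps
        # became due since the previous frame.
        while (self._next < len(self._traps)
               and self._traps[self._next][0] <= track_time):
            self._traps[self._next][1]()
            self._next += 1


# Example: three traps given out of order, then a few simulated frames.
fired = []
activator = TrapActivator([
    (3.0, lambda: fired.append("gate")),
    (1.0, lambda: fired.append("spikes")),
    (2.0, lambda: fired.append("saw")),
])
for track_time in (0.5, 1.5, 3.2):
    activator.update(track_time)
```

On frames where nothing is due, the loop does a single comparison against the next trap and exits, no matter how many traps the level has. Feeding `update` the audio player’s reported playback position, rather than a separately accumulated timer, is one way to keep the traps from drifting away from the music.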

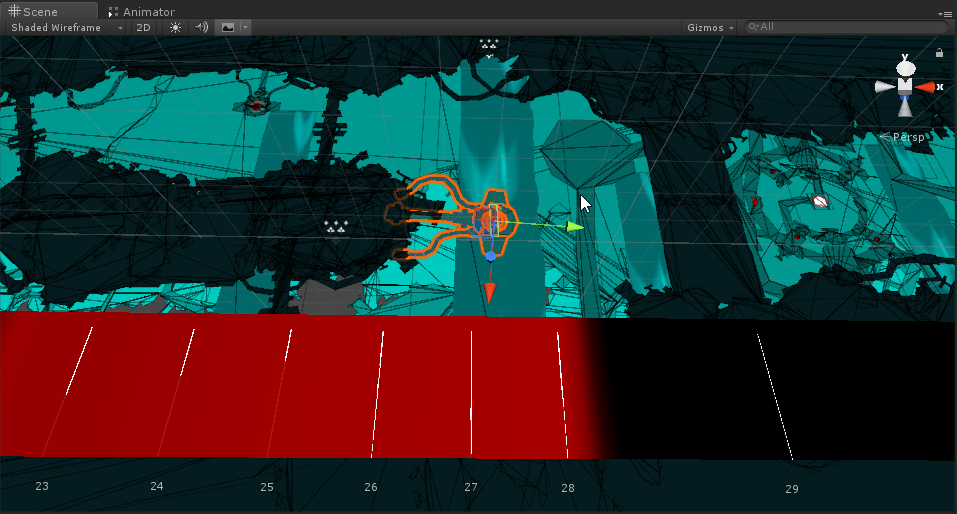

Some traps should only be activated if a player passes through a certain area. For these, we use trigger colliders, set to the right place using the timeline:

So, that’s an overview of audio synchronization in our game. I hope you find this insight useful.